Azure DevOps: Your own on-demand agents in your corporate network

An end-to-end solution to run containerized Azure DevOps agents in your corporate network, anyone? You will be able to use Azure DevOps Services to manage the deployment of your applications and services even in secure areas of your corporate network.

In my previous articles, I touched on the subject (Azure DevOps: Creating your own agentless task and Azure Batch, the unloved). In this article, I detail how to put it all in place.

Why do it?

- If you want to use Azure DevOps Services to deploy your applications and services in your isolated corporate network.

- If you want to use containers for your build and/or deployment operations to avoid side effects related to previous CI/CD pipeline executions.

- If you want to reduce the build and deployment time of your pipelines by pre-installing your frameworks and legacy tools on your agents.

Architecture

I suggest you orchestrate your Azure DevOps agents with Azure Batch. The entire architecture will therefore be on Azure. Of course, this is only a proposal: you can certainly build an equivalent architecture on another cloud provider or on your private cloud.

Concretely, here is the sequence of events when an ephemeral agent is provisioned:

- An agentless "Invoke Azure Function" task from the build/deployment Azure DevOps pipeline calls the ProvideAzDOAgent function to request an ephemeral agent,

- For each call, the Azure Function creates a task in the Azure Batch account,

- The Azure Batch account processes the task and assigns it to the correct pool of nodes,

- The pool of nodes instantiates a container from the image stored in the Azure Container Registry,

- Once the container is instantiated and started, it registers with Azure DevOps to signal that it is available. Azure DevOps then assigns it a pending job,

- The Azure DevOps job runs in the Virtual Network A and can communicate with the on-premises network.

We can see that the Azure Batch service (and its pools) as well as Azure Container Registry are completely isolated from a network point of view. Only the Azure Function is publicly accessible. However, the latter has a firewall rule limiting calls to public IP ranges of Azure DevOps Services.

Note

Microsoft publishes the IP ranges of Azure DevOps Services for each region: see "Azure DevOps Services: Inbound connections" in the references.
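To make the firewall rule more concrete, here is a minimal Python sketch of the check it performs: accept a caller only if its IP address falls inside an allowed CIDR range. The ranges below are placeholders taken from the RFC 5737 documentation blocks, not the real Azure DevOps ranges, and the `is_allowed` function is a hypothetical helper written for this illustration.

```python
import ipaddress

# Placeholder ranges (RFC 5737 documentation blocks), NOT real Azure DevOps ranges.
# Replace them with the ranges Microsoft publishes for your region.
ALLOWED_RANGES = ["203.0.113.0/24", "198.51.100.0/25"]


def is_allowed(caller_ip: str, allowed_ranges=ALLOWED_RANGES) -> bool:
    """Return True when caller_ip falls inside one of the allowed CIDR ranges."""
    address = ipaddress.ip_address(caller_ip)
    return any(address in ipaddress.ip_network(cidr) for cidr in allowed_ranges)


if __name__ == "__main__":
    for ip in ("203.0.113.42", "198.51.100.200", "192.0.2.10"):
        print(ip, "allowed" if is_allowed(ip) else "rejected")
```

In the real solution this filtering is done by the access restrictions of the Azure Function itself, not by application code.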

Update of February 5, 2022

When posting this article, I forgot an important part of securing this solution. In the first version, the nodes of the Azure Batch pools had a public IP address, which allowed access through RDP on Windows and SSH on Linux. My colleague, Etienne Louise, pointed it out to me and did the groundwork for me by giving me the solution directly. To fix this:

- When creating the pool, you must specify that no public IP address should be created (see the Microsoft documentation),

- You must add an Azure NAT Gateway attached to the pools' subnet, so that the nodes can still reach the internet to register with Azure DevOps.

All that remains is to deploy our infrastructure on Azure.

Infrastructure

The ARM template below lets you deploy the infrastructure quickly.

This ARM template provides two pools of nodes: one for Azure DevOps agents on Windows and another for Linux agents.

Note

Since Windows containers can only run on a Windows host, we have no choice but to provision a separate pool for each OS.

Update of February 5, 2022

The infrastructure available above takes into account the remarks of Etienne Louise.

Auto-scale

Azure Batch offers an auto-scaling system for nodes in a pool. In our case, we are going to use it to avoid running VMs on Azure for several hours without build or deployment operations. For each of these pools, I added the auto-scaling script below:

$nbTaskPerNodes = $TaskSlotsPerNode;

$currentNodes = $TargetLowPriorityNodes;

$nbPending5min = $PendingTasks.GetSamplePercent(TimeInterval_Minute * 5) < 70 ? max($PendingTasks.GetSample(1)) : max($PendingTasks.GetSample(TimeInterval_Minute * 5));

$nbPending60min = $PendingTasks.GetSamplePercent(TimeInterval_Minute * 60) < 70 ? 1000 : max($PendingTasks.GetSample(TimeInterval_Minute * 60));

$totalLowPriorityNodes = $nbPending5min > max(0, $TaskSlotsPerNode * $currentNodes) ? $currentNodes + 1 : $currentNodes;

$totalLowPriorityNodes = $nbPending60min <= $TaskSlotsPerNode * max(0, $currentNodes - 1) ? $currentNodes - 1 : $totalLowPriorityNodes;

$totalLowPriorityNodes = min(4, max($totalLowPriorityNodes, 0));

$TargetLowPriorityNodes = $totalLowPriorityNodes;

$NodeDeallocationOption = taskcompletion;

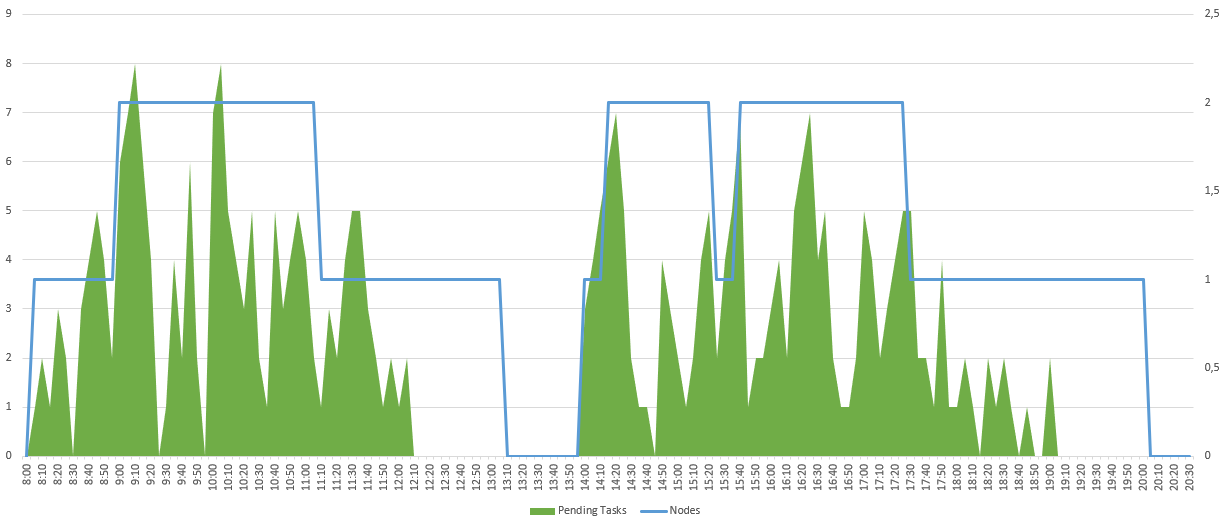

This script evaluates the number of tasks in progress or pending:

- If this number is greater than the number of nodes multiplied by the number of task slots per node, a node is added.

- If this number is less than or equal to (the number of nodes - 1) multiplied by the number of task slots per node, a node is released.

The scale-out (addition of a node) is evaluated over the last 5 minutes in order to react as quickly as possible to an increase in the number of tasks. The scale-in (removal of a node) is evaluated over the last hour so that a brief dip in activity does not release a node too early.
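To check the behavior of these rules without touching a real pool, the decision logic can be mirrored in a few lines of Python. This is only a sketch of the formula above: the function and parameter names are mine, and the sampling part (`GetSamplePercent`, `GetSample`) is left out, so the two pending-task counts are passed in directly.

```python
def next_target_nodes(pending_5min: int, pending_60min: int,
                      slots_per_node: int, current_nodes: int,
                      max_nodes: int = 4) -> int:
    """Mirror the scale-out / scale-in rules of the autoscale formula."""
    target = current_nodes
    # Scale out: more tasks than the current nodes can run in parallel.
    if pending_5min > max(0, slots_per_node * current_nodes):
        target = current_nodes + 1
    # Scale in: the hourly peak would still fit on one node fewer.
    # As in the formula, this rule takes precedence over the scale-out.
    if pending_60min <= slots_per_node * max(0, current_nodes - 1):
        target = current_nodes - 1
    # Clamp between 0 and the maximum pool size (4 in the formula).
    return min(max_nodes, max(target, 0))


if __name__ == "__main__":
    print(next_target_nodes(3, 3, slots_per_node=2, current_nodes=0))  # 1
    print(next_target_nodes(0, 1, slots_per_node=2, current_nodes=2))  # 1
    print(next_target_nodes(20, 20, slots_per_node=2, current_nodes=4))  # 4
```

Passing 1000 as `pending_60min` reproduces the fallback used by the formula when fewer than 70% of the hourly samples are available: no node is released.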

Here is an example of what the activity could look like over a day:

Limitations

However, you should know that there are some small limitations:

- Although you can deploy your Azure Batch pools with ARM, it is not possible to update the pool infrastructure incrementally. In other words, you cannot modify an existing pool. Worse, you will get an error if your pool already exists when your ARM template runs. To work around this limitation, I added two parameters, "create_WindowsBatchPool" and "create_UbuntuBatchPool", which let you skip the creation of a pool that already exists.

- A newly created container registry does not contain any images. You therefore need to plan for importing the images of your Azure DevOps agents.

To address this second limitation, let's look at how to containerize our Azure DevOps agent.

Containerize your Azure DevOps agent

Browsing through Docker Hub looking for a Docker image of an Azure DevOps agent, I found Czon. His project automates the build of Docker images so that they always embed the latest version of the Azure DevOps agent. On his Docker Hub repository, Czon shares Ubuntu images with the latest version of the Azure DevOps agent. Classy!

So I took the liberty of forking his GitHub repository to make a few small changes to Czon's already excellent work and add the following features:

- Creation of containers based on Windows ltsc2019, Ubuntu 18.04 and Ubuntu 20.04,

- And for each of these OS, addition of the dotnet core 3.1 and dotnet 6.0 frameworks.

You can find my modifications on my GitHub repository, and the generated images on Docker Hub.

All that remains is to import the images from Docker Hub to your Azure Container Registry with the following Azure CLI commands:

az acr import -n [YOUR_ACR_NAME] --source docker.io/pmorisseau/azdo-agent:ubuntu-20.04-azdo -t azdo-agent:ubuntu-20.04-azdo

az acr import -n [YOUR_ACR_NAME] --source docker.io/pmorisseau/azdo-agent:ubuntu-18.04-azdo -t azdo-agent:ubuntu-18.04-azdo

az acr import -n [YOUR_ACR_NAME] --source docker.io/pmorisseau/azdo-agent:windows-core-ltsc2019-azdo -t azdo-agent:windows-core-ltsc2019-azdo
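Since the three commands differ only by the tag, they can be generated rather than typed by hand, which avoids copy-paste mistakes between source and target tags. The sketch below only builds and prints the command lines. The registry name `myregistry` is a placeholder, and it assumes every image is published under a tag following the same naming pattern as the ones above.

```python
import shlex

SOURCE_REPOSITORY = "docker.io/pmorisseau/azdo-agent"
# Assumption: each image is published under a tag following this pattern.
TAGS = ["ubuntu-20.04-azdo", "ubuntu-18.04-azdo", "windows-core-ltsc2019-azdo"]


def import_command(registry_name: str, tag: str) -> list[str]:
    """Build the 'az acr import' argument list for one image tag."""
    return [
        "az", "acr", "import",
        "-n", registry_name,
        "--source", f"{SOURCE_REPOSITORY}:{tag}",
        "-t", f"azdo-agent:{tag}",  # same tag in the target registry
    ]


if __name__ == "__main__":
    for tag in TAGS:
        # "myregistry" is a placeholder for [YOUR_ACR_NAME].
        print(shlex.join(import_command("myregistry", tag)))
```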

Now let's move on to writing the function that will create the tasks in Azure Batch.

An Azure function to drive Azure Batch

Our goal is to create a task running our containerized image in Azure Batch through an HTTP request to an Azure Function.

As a reminder, a task must be executed in a job. So if the job does not exist, it will have to be created. Our job will also allow us to define the environment variables of our containerized agent. In our case, we need to set as environment variables:

- The URL of our Azure DevOps organization,

- The personal access token that allows our agent to authenticate with the organization,

- The name of the pool to which our agent will be added,

- The flag indicating whether the agent should run only one Azure DevOps job.
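As an illustration, here is a Python sketch of how these four values could be turned into the environment settings of a Batch job. It is not the function's actual code. The variable names `AZP_URL`, `AZP_TOKEN` and `AZP_POOL` are those used by Microsoft's sample for running the agent in Docker, `AZP_RUN_ONCE` is a hypothetical name for the run-once flag, and the image used here may expect different names. The payload shape (`id`, `poolInfo`, `commonEnvironmentSettings`) follows my understanding of the Batch REST API for adding a job.

```python
def agent_environment(org_url: str, token: str, pool_name: str,
                      run_once: bool = True) -> list[dict]:
    """Build the name/value pairs passed to the containerized agent."""
    settings = {
        "AZP_URL": org_url,        # URL of the Azure DevOps organization
        "AZP_TOKEN": token,        # personal access token
        "AZP_POOL": pool_name,     # Azure DevOps agent pool to join
        "AZP_RUN_ONCE": "true" if run_once else "false",  # hypothetical name
    }
    return [{"name": name, "value": value} for name, value in settings.items()]


def job_payload(job_id: str, batch_pool_id: str, environment: list[dict]) -> dict:
    """Assemble a job definition that shares the environment with all its tasks."""
    return {
        "id": job_id,
        "poolInfo": {"poolId": batch_pool_id},
        "commonEnvironmentSettings": environment,
    }


if __name__ == "__main__":
    # All values below are placeholders.
    env = agent_environment("https://dev.azure.com/contoso", "<PAT>", "Ubuntu")
    print(job_payload("azdo-agents-ubuntu", "ubuntu-pool", env))
```

Defining the variables at job level means every task added to the job inherits them, so the function only has to add a task for each agent request.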

We will use .NET 6.0 to write our Azure Function. The source code created for the occasion is available in my GitHub repository (see the links in the conclusion).

At the time of writing, a personal access token (PAT) is the only way to authenticate when adding a self-hosted agent to a pool on Azure DevOps. This is not ideal:

- A PAT has a limited validity period, which means you will have to renew it regularly.

- A PAT is tied to an Azure DevOps user: if that user leaves, the PAT is revoked and a new token has to be generated.

Note

Microsoft provides the procedure for creating a PAT for your Azure DevOps agent: see "Authenticate with a personal access token (PAT)" in the references.

Here is the necessary configuration for our Azure Function:

| Name | Description |
|---|---|
| BatchAccountUrl | URL of the Azure Batch service |
| BatchAccountName | Name of the Azure Batch service |
| BatchAccountKey | Access key of the Azure Batch service |
| ContainerRegistryServer | Full name of the Azure Container Registry service |
| AzDOUrl | URL of our Azure DevOps organization |
| AzDOToken | Personal access token allowing our agent to authenticate on the organization |
| AzDOUbuntuPool | Name of the pool containing agents running on Ubuntu |
| AzDOWindowsPool | Name of the pool containing agents running on Windows |
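Azure Functions exposes application settings to the code as environment variables, so a missing setting is easy to detect at startup instead of on the first agent request. The Python sketch below illustrates the idea with the names from the table. The actual function is written in .NET, and `load_settings` is a hypothetical helper.

```python
import os

REQUIRED_SETTINGS = [
    "BatchAccountUrl", "BatchAccountName", "BatchAccountKey",
    "ContainerRegistryServer", "AzDOUrl", "AzDOToken",
    "AzDOUbuntuPool", "AzDOWindowsPool",
]


def load_settings(environ=os.environ) -> dict:
    """Read every required setting and fail early if one is missing or empty."""
    missing = [name for name in REQUIRED_SETTINGS if not environ.get(name)]
    if missing:
        raise ValueError("Missing application settings: " + ", ".join(missing))
    return {name: environ[name] for name in REQUIRED_SETTINGS}


if __name__ == "__main__":
    try:
        settings = load_settings()
        print("Configuration loaded for", settings["AzDOUrl"])
    except ValueError as error:
        print(error)
```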

Integration with Azure DevOps

Now that we have our solution operational on Azure, we need to allow our Azure DevOps pipelines to request an agent and use it when available.

First of all, you need to create the agent pools on Azure DevOps. Microsoft's "Create and manage agent pools" documentation explains the procedure.

Next, we add to our pipeline an agentless (server-side) job containing an "Invoke Azure Function" task. This task must perform an HTTP POST request.

Below is an example YAML pipeline using this task.

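jobs:
# Agentless job: asks the Azure Function for an ephemeral agent.
- job: RequestAzDOAgent
  pool: server
  steps:
  - task: AzureFunction@1
    displayName: 'Request new ephemeral agent'
    inputs:
      function: 'https://[YOUR_FUNCTION_NAME].azurewebsites.net/api/ProvideAzDOAgent'
      key: '[YOUR_FUNCTION_KEY]'
      body: |
        {
          "AgentOSType":"ubuntu-latest"
        }
# Runs on the ephemeral agent once it has registered in the pool.
- job: RunWithEphemeralAgent
  dependsOn: RequestAzDOAgent
  pool:
    name: '[AzDOUbuntuPool]'
  steps:
  # Placeholder script: replace it with your own build or deployment steps.
  - bash: echo "Running on the ephemeral agent"
    displayName: 'Bash Script'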

Note

The YOUR_FUNCTION_NAME and YOUR_FUNCTION_KEY values must be retrieved beforehand from your Azure Function.

The AzDOUbuntuPool value corresponds to the name of the pool defined in Azure DevOps.
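To test the function outside a pipeline, the same POST request can be reproduced by hand. Here is a minimal Python sketch that only builds the request. It assumes the key is sent in the `x-functions-key` header, which Azure Functions accepts for key-based authorization. The function name and key are placeholders, and the call will only succeed from an IP address allowed by the function's firewall rule.

```python
import json
import urllib.request


def build_agent_request(function_name: str, function_key: str,
                        agent_os_type: str = "ubuntu-latest") -> urllib.request.Request:
    """Build the HTTP POST request that asks for an ephemeral agent."""
    url = f"https://{function_name}.azurewebsites.net/api/ProvideAzDOAgent"
    body = json.dumps({"AgentOSType": agent_os_type}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "x-functions-key": function_key,  # function key sent as a header
        },
    )


if __name__ == "__main__":
    request = build_agent_request("[YOUR_FUNCTION_NAME]", "[YOUR_FUNCTION_KEY]")
    print(request.get_method(), request.full_url)
    # To actually send it: urllib.request.urlopen(request)
```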

And there you go!

Well no, almost...

Indeed, when you launch your pipeline, it will systematically fail with an error indicating that there is no agent in your pool. Between the moment you request your agent and the moment it becomes available, a few seconds or even a few minutes may pass (on the first run of the day, for example). Meanwhile, Azure DevOps immediately launches the next job, the one that is supposed to run on your ephemeral agent. Finding no agent, the pipeline wrongly concludes that there will never be any agent in this pool and fails.

But then how do we tell our pipeline instance to wait?

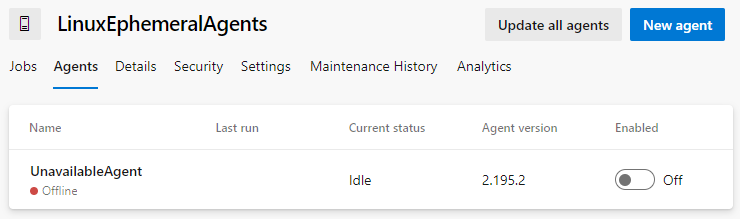

My solution is to register a "dummy" agent in the pool. Nothing could be simpler: follow the procedure for installing a self-hosted agent, then uninstall it without unregistering it. You thus keep an offline agent in your pool, which is enough to make Azure DevOps wait until your ephemeral agent is available.
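The behavior described above can be summarized by a small Python model. It is a simplification of what I observed, not Azure DevOps's actual scheduling logic: the `status` values mirror the "online" and "offline" states shown for agents in a pool, and the function name is hypothetical.

```python
def next_job_outcome(agents: list[dict]) -> str:
    """Model what happens to a job queued on a pool with the given agents."""
    if not agents:
        # Empty pool: the job fails immediately.
        return "fail"
    if any(agent.get("status") == "online" for agent in agents):
        # At least one agent is available: the job can be assigned.
        return "run"
    # Only offline agents (such as the dummy one): the job stays queued.
    return "wait"


if __name__ == "__main__":
    dummy = {"name": "dummy", "status": "offline"}
    ephemeral = {"name": "ephemeral-1", "status": "online"}
    print(next_job_outcome([]))                  # fail
    print(next_job_outcome([dummy]))             # wait
    print(next_job_outcome([dummy, ephemeral]))  # run
```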

It's ugly, but it works!

Conclusion

Through this article I have tried to show you several points:

- That you can use managed services as an enabler of your software production platform,

- That you can secure your software deployments even with cloud solutions,

- That you can set up lean build and deployment solutions by containerizing the frameworks and tools you need,

- That you can easily integrate it with Azure DevOps.

Not everything is perfect, and I would have preferred:

- That Azure Batch pools were better handled by Azure Resource Manager,

- That we could register Azure DevOps agents without using a personal access token,

- That we did not have to create a "dummy" agent in Azure DevOps.

Still, I hope I have convinced you of my approach. If you are interested, feel free to reuse what I produced for this article:

- The fork of Czon's GitHub repository, used to build images with the AzDO agent

- My Docker Hub repository containing the images with the latest version of the AzDO agent

- My GitHub repository containing the whole solution (Infra + Code + AzDO Pipeline)

And what about GitHub runners? We will see that in a future article...

References

- Azure DevOps Services: Inbound connections

- GitHub Czon: Azure Pipelines Agent Docker Container

- Docker Hub Czon: AzDO agent

- Azure DevOps: Authenticate with a personal access token (PAT)

- Azure DevOps: Create and manage agent pools

Thanks

- David Dubourg: for proofreading

- Quentin Joseph: for proofreading

- Fabrice Weinling: for proofreading

- Etienne Louise: for the remark and the solution with the NAT Gateway

Written by Philippe MORISSEAU, Published on January 25, 2022. Updated on February 5, 2022